Raia Hadsell, VP of Research at Google DeepMind, recently took the stage at AI Engineer Europe to discuss the evolving frontiers of artificial intelligence and its impact on the future of intelligence itself. With over 13 years dedicated to bridging academia and industry in AI, Hadsell, who also serves as a UK ambassador for AI, offered a compelling glimpse into DeepMind’s ambitious research agenda.

From Philosophy to AI: A Research Journey

Hadsell’s own journey into AI began with a background in philosophy, a field that instilled a deep appreciation for the fundamental questions surrounding intelligence and consciousness. This academic grounding, she explained, unexpectedly led her into the practical, computational world of AI, where she spent her early career working on convolutional neural networks for robotics.

Her career then shifted toward more complex AI challenges, including working with Yann LeCun on neural networks and later moving to Google DeepMind. There, she leads a team of over 1,200 scientists and engineers across 10 labs, focusing on foundational AI research that spans a remarkable breadth of domains. This includes “Agentic Worlds,” aiming for advanced world models and general embodied agents; “AI for Humans,” focusing on social science, healthcare, and education; and “Sustainability,” dedicated to modeling climate, energy, and the planet. Additionally, DeepMind is exploring “Creative Technologies” to push the boundaries of AI-powered creativity and “Advanced Models” for foundational learning and multimodal research.

Gemini: A Unified Vision for AI

A significant portion of Hadsell’s talk centered on Google DeepMind’s Gemini models. She highlighted Gemini Embeddings 2, an omnimodal, Gemini-derived representation function designed for retrieval that she described as “bordering on magical.” This model, launched in preview on Vertex AI and the Gemini API, aims to unify semantic space by seamlessly mapping text, images, video, audio, and PDFs into a single embedding space.

Hadsell emphasized the “native advantage” of Gemini Embeddings 2: because every modality is embedded directly, it eliminates “lossy” intermediate steps like OCR or transcription. This approach not only simplifies complex pipelines but also unlocks a wider variety of high-value multimodal applications. She also pointed out that Gemini Embeddings 2 tops benchmarks across modalities and captures complex relationships across over 100 languages, building on the Gemini architecture and inheriting its industry-leading multimodal and contextual understanding.
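To make the idea of a single embedding space more concrete, here is a minimal sketch of cross-modal retrieval by cosine similarity. It does not call Gemini or any real API: the three-dimensional vectors, the file names, and the `search` helper are all hypothetical stand-ins for what an embedding model would return.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical, hand-written vectors standing in for real embeddings.
# In a shared space, a PDF, a photo, and an audio clip are all just vectors.
corpus = {
    "invoice.pdf": [0.9, 0.1, 0.0],
    "beach_photo.jpg": [0.1, 0.9, 0.2],
    "podcast_clip.mp3": [0.0, 0.3, 0.9],
}

def search(query_vector, corpus, top_k=2):
    """Return the names of the top_k items most similar to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), name)
        for name, vector in corpus.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]

# Pretend this is the embedding of the text query "sunny day at the sea".
query = [0.2, 0.8, 0.1]
results = search(query, corpus)
print(results)
```

Because the text query and the stored items live in the same space, one similarity function ranks all of them, with no OCR or transcription step in between.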

Advancing AI Through Simulation and Beyond

The talk then turned to the critical role of games and simulation in research toward Artificial General Intelligence (AGI). Hadsell showcased DeepMind’s pioneering work in this area, starting with early successes in Atari games in 2013, followed by mastering Go, chess, and shogi with AlphaGo and its successors, starting in 2016. Subsequent advances included reaching grandmaster level in StarCraft II using multi-agent reinforcement learning in 2019 and, more recently, the DeepMind Control Suite and Catch & Carry for robotics.

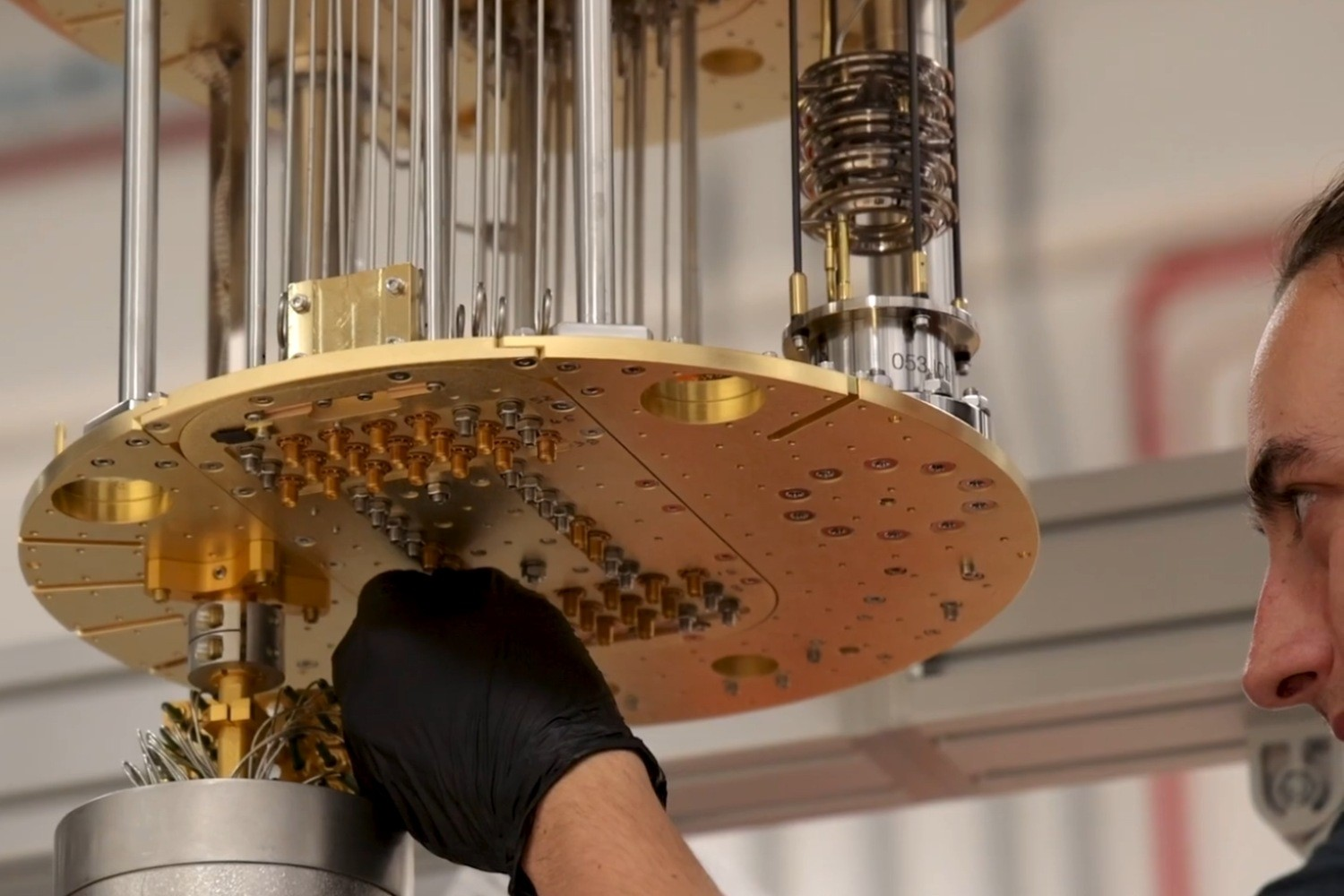

Hadsell then elaborated on GenCast (2024), an AI model designed for probabilistic weather forecasting. She explained that the chaotic nature of weather necessitates probabilistic forecasts, which give users crucial uncertainty information and probabilities for extreme events. Unlike traditional physics-based solvers, which are slow and computationally intensive, GenCast produces its probabilistic forecasts via sampling, an approach that was shown to be significantly faster and more accurate than existing models, outperforming gold-standard forecasts on 97% of evaluations.
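As a rough illustration of how sampling yields probabilities for extreme events, the sketch below draws many forecasts and counts the share that exceed a threshold. It is not GenCast: `sample_forecast` is a hypothetical stand-in that draws wind speeds from a Gaussian, and the mean, spread, and 30 m/s threshold are made-up values.

```python
import random

def sample_forecast(rng, mean_wind=20.0, spread=6.0):
    """Hypothetical stand-in for one sampled forecast: wind speed in m/s."""
    return rng.gauss(mean_wind, spread)

def exceedance_probability(samples, threshold):
    """Fraction of ensemble members above the threshold."""
    return sum(1 for s in samples if s > threshold) / len(samples)

rng = random.Random(0)  # fixed seed so the run is repeatable
ensemble = [sample_forecast(rng) for _ in range(1000)]

# Estimated probability that wind speed exceeds 30 m/s.
p_extreme = exceedance_probability(ensemble, threshold=30.0)
print(f"P(wind > 30 m/s) is roughly {p_extreme:.3f}")
```

A single deterministic forecast would report only one wind speed. The ensemble instead gives a spread, from which the likelihood of a rare event can be read off directly.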

The discussion also touched upon the team’s work in generating diverse, interactive 3D environments with models like Genie 2 and Genie 3. Genie 2, the first model to create and simulate a diversity of 3D environments, allows for realistic, non-real-time control. Genie 3, a more advanced iteration, offers long-horizon memory for consistent generation over multiple minutes and promptable world events, enabling users to interact with and shape these virtual worlds. Hadsell illustrated this with examples of generating a playable world from a text prompt, a futuristic city, a clay-like environment, and even a detailed 3D world with a creature that could be guided through it.

The presentation concluded with a forward-looking perspective, highlighting the potential of these advancements to revolutionize various fields, from entertainment and education to scientific research and environmental modeling. Google DeepMind’s ongoing work in these areas signifies a commitment to pushing the boundaries of what AI can achieve, shaping a future where intelligence, both artificial and human, can thrive.
