AI in customer support is moving from answering questions to taking actions. That shift changes everything, especially in regulated industries where a single mistake can create compliance issues, customer harm, or financial loss. Notch is building AI agents designed to resolve issues end to end, with controls that help enterprises stay safe and consistent in production.

Notch is tackling one of the hardest problems in enterprise support: true autonomous resolution in regulated environments. In customer deployments, Notch reports processing over 10 million support tickets and achieving up to 87% end-to-end resolution within 12 months, meaning the agent completes the workflow, not just replies.[1,2]

From insurance constraints to a scalable platform

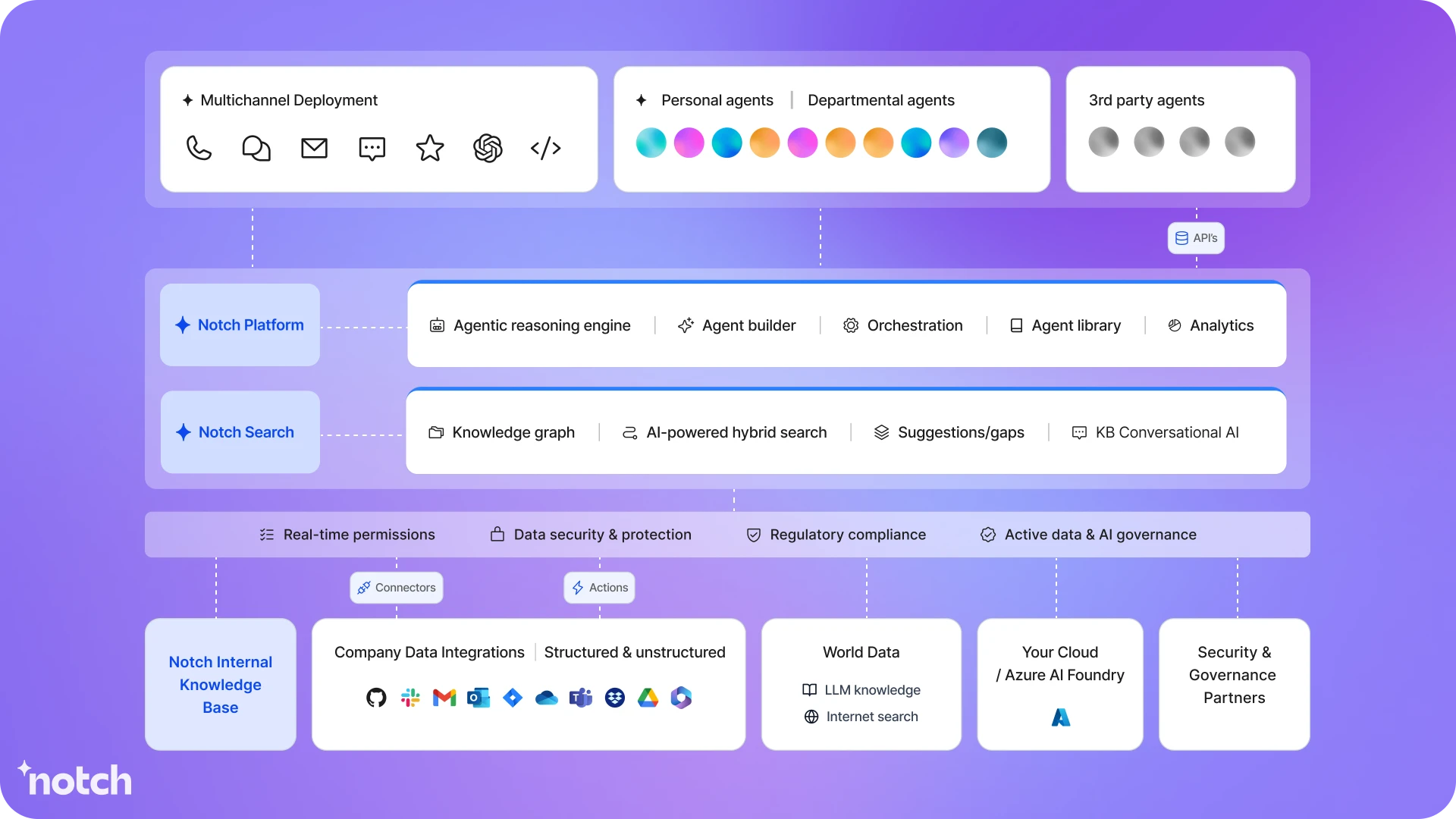

Elool Jacoby, co-founder and CPO of Notch, built the company while operating inside regulated insurance, where automation had to be safe, auditable, and repeatable. Before Notch became an AI operating system for regulated industries, the team built one of the first commercial insurance products designed for digital assets, operating under strict auditability, documentation, and policyholder communication requirements.

As the business scaled, they saw the core problem: in insurance, “support” is rarely just answering a question. It often means executing regulated actions inside systems of record, with real financial and legal consequences. Existing chatbots could deflect tickets, but they could not safely complete end-to-end workflows with deterministic controls, hard limits, and a clean audit trail.

So the team built an internal operating layer that combined conversational AI with structured execution logic, permissioning, validation, and mandatory escalation when policy or data was unclear. That internal foundation became Notch.

Why customer support needs a new model

Traditional chatbots can answer questions. Regulated support requires completing actions safely. That distinction matters when support touches identity verification, payments, refunds, account changes, and other policy-driven actions inside systems of record. Enterprises want automation, but they also need control.

This is where many agent pilots stall. Security and compliance teams do not just ask, “Does it work?” They ask:

- Can we control what the agent is allowed to do?

- Can we review and audit the actions it takes?

- Can we stop risky behavior quickly?

- Can we prove it follows policy across every region we operate in?

Early constraints shaped the product: every action had to be auditable and reproducible, the system could not make adjudication or legal decisions, high-risk actions required deterministic validation and configurable limits, and escalation was mandatory when policy was ambiguous or data was incomplete.

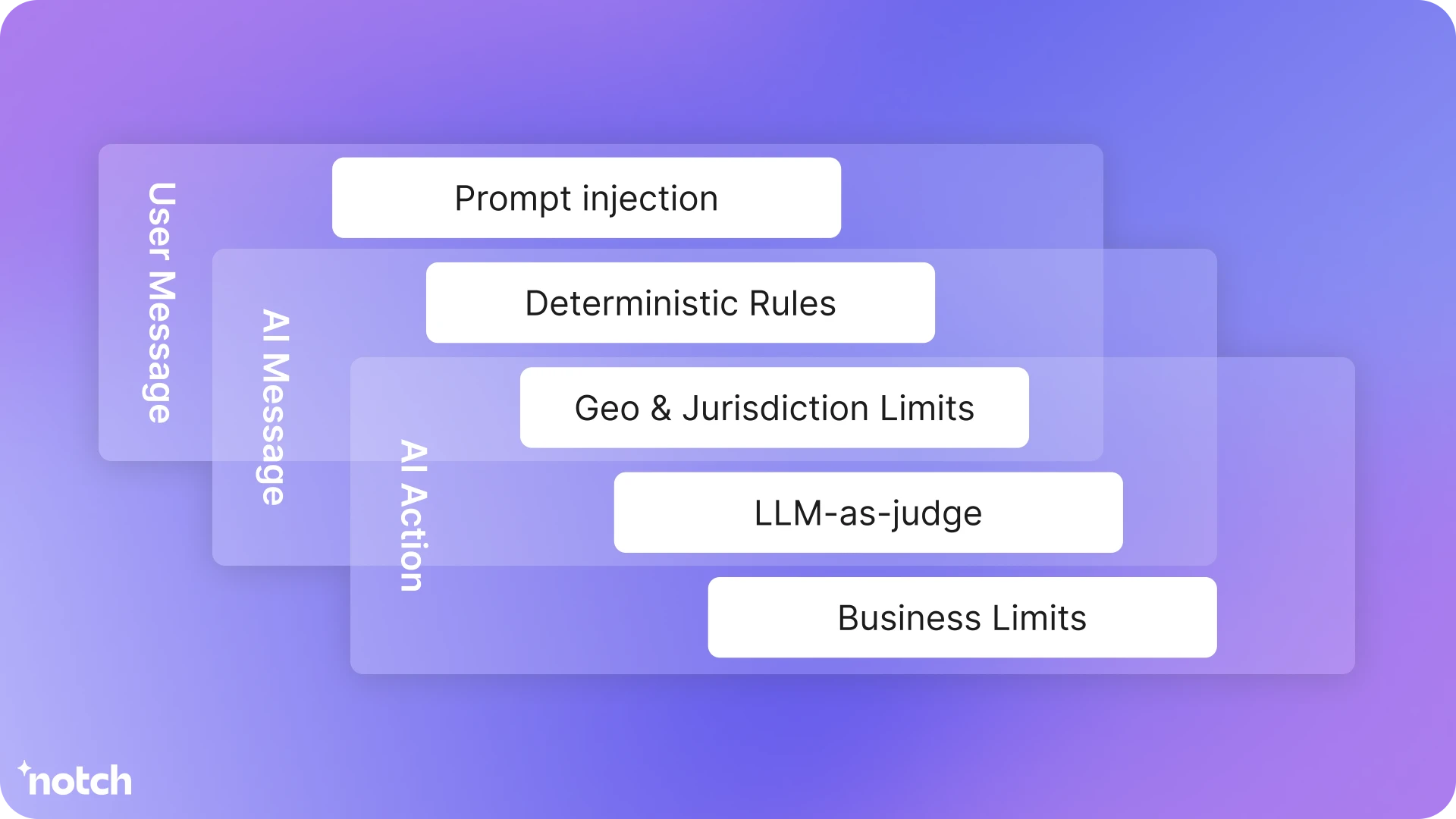

Five guardrails for action-taking agents

In regulated environments, guardrails cannot be a single feature. They have to be a layered control system that combines policy, product limits, and technical defenses. Notch’s approach uses five layers to keep autonomy safe and reliable, with escalation when risk rises or context is incomplete.

Notch’s model is designed to prevent unsafe actions by default.

1. Conversation safety checks

Notch monitors conversations for boundary risks like loops, frustration signals, or disallowed topics. When risk or uncertainty is detected, Notch escalates.

2. Defense against tricks and abuse

Notch includes defenses against prompt injection, instruction smuggling, tool abuse, and attempts to extract internal logic.

3. Clear access rules

Hard rules determine what a user can view or initiate based on verified state such as authentication, role, channel, region, and other identity signals. In plain language, the agent cannot take an action unless the person has the right permissions.

4. Business limits for high-risk actions

Business limits place hard stops on execution even for eligible users, including thresholds, rolling counters, and approval requirements for high-risk actions.

5. Region and jurisdiction rules

Jurisdiction-aware rules ensure workflows, disclosures, and allowable actions match local regulatory requirements.
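To make layers 3 through 5 concrete, here is a minimal Python sketch of how access rules, business limits, and region rules might be checked before a refund runs. It is an illustration under stated assumptions, not Notch's implementation: `UserState`, `RefundPolicy`, the reason strings, and every threshold are hypothetical names and values chosen for the example.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class UserState:
    # Hypothetical verified-state fields; the article lists authentication,
    # role, channel, and region as examples of identity signals.
    authenticated: bool
    role: str
    region: str


@dataclass
class RefundPolicy:
    # All values below are illustrative assumptions, not real settings.
    allowed_roles: frozenset = frozenset({"account_owner"})
    allowed_regions: frozenset = frozenset({"US", "EU"})
    max_amount: float = 200.0           # per-action threshold
    max_per_window: int = 3             # rolling counter limit
    window_seconds: float = 24 * 3600   # rolling window length
    _history: dict = field(default_factory=dict)

    def check(self, user_id, user, amount, now):
        """Return (allowed, reason) for a refund request at time `now` (seconds)."""
        # Layer 3: access rules based on verified state.
        if not user.authenticated or user.role not in self.allowed_roles:
            return False, "access_denied"
        # Layer 5: jurisdiction rule.
        if user.region not in self.allowed_regions:
            return False, "region_not_permitted"
        # Layer 4: hard threshold that applies even to eligible users.
        if amount > self.max_amount:
            return False, "needs_approval"
        # Layer 4: rolling counter; drop events that fell out of the window.
        events = self._history.setdefault(user_id, deque())
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_per_window:
            return False, "rate_limit_reached"
        events.append(now)
        return True, "ok"
```

Each check is a plain deterministic rule, so the same inputs always give the same result and the returned reason can be written to an audit log. Only approved actions count toward the rolling limit in this sketch; a real deployment might count attempts instead.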

Escalation

Notch escalates when knowledge is missing, policy is ambiguous, or risk is elevated. This ensures autonomy stays governed and does not guess in high-stakes situations.
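The three escalation triggers can be sketched as a small decision function. This is a hedged illustration only: the `Context` fields, the idea of counting matching policy rules to detect ambiguity, and the `0.7` risk threshold are all assumptions made for the example, not details from Notch.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    ESCALATE = "escalate"


@dataclass
class Context:
    # Hypothetical signals; names and scales are assumptions.
    knowledge_found: bool   # was a relevant policy or article found?
    policy_matches: int     # how many policy rules apply to this request
    risk_score: float       # 0.0 (low) to 1.0 (high)


def decide(ctx: Context, risk_threshold: float = 0.7) -> tuple[Decision, str]:
    """Escalate instead of guessing when any trigger fires."""
    if not ctx.knowledge_found:
        return Decision.ESCALATE, "missing_knowledge"
    # Zero or several applicable rules is treated as ambiguity here.
    if ctx.policy_matches != 1:
        return Decision.ESCALATE, "ambiguous_policy"
    if ctx.risk_score >= risk_threshold:
        return Decision.ESCALATE, "elevated_risk"
    return Decision.PROCEED, "ok"
```

The function proceeds only when every check passes, so an unexpected or incomplete context falls through to a human rather than to an automated action.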

Together, these layers allow enterprises to adopt autonomous resolution while keeping the controls they need.

Microsoft for Startups: A catalyst for enterprise readiness and go-to-market

As Notch expanded into higher-volume, higher-trust deployments, the team worked with the Microsoft for Startups Pegasus Program to strengthen enterprise readiness and go-to-market execution. Notch runs its models and agent orchestration on Microsoft Azure to support production needs for availability, latency, and resilience, and uses Microsoft Marketplace to simplify enterprise discovery, procurement, and deployment in Microsoft environments.

For founders, the takeaway is clear: define permissions early, design for escalation, and treat auditability as a core product feature, not a later add-on. Notch’s layered guardrails offer one blueprint for shipping action-taking agents in regulated environments without trading speed for control.

Notch is one example of what’s possible with Microsoft for Startups. If you’re building with AI for enterprise buyers, Microsoft for Startups can help with Azure credits, technical guidance, and go-to-market support.

[1] Notch.cx Raises $15M After AI Agents Slash Enterprise Support Costs by 50%
