- Nvidia executes its largest-ever play, acquiring Groq’s hardware assets to fortify its AI dominance.

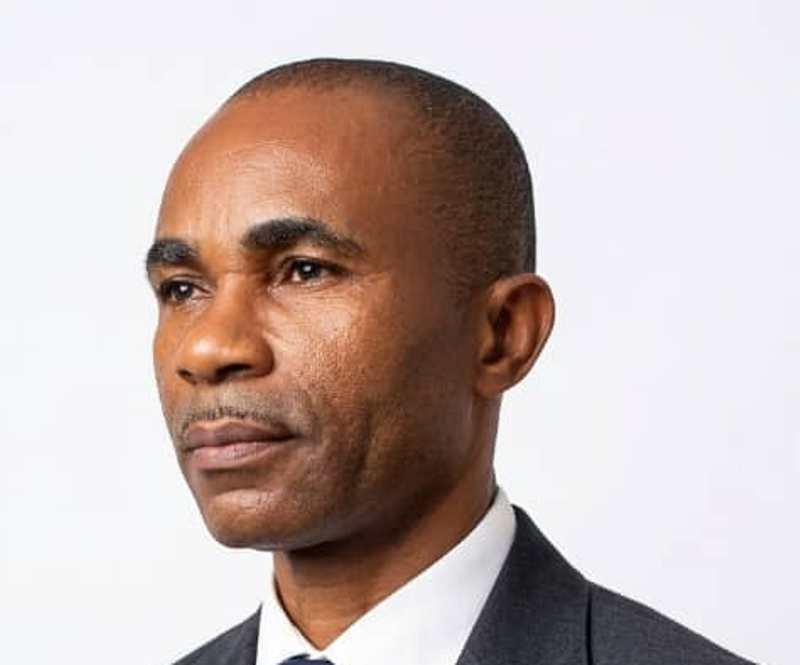

- The deal effectively splits the startup: founder Jonathan Ross relocates to Nvidia, while Groq maintains its cloud service.

While most of Silicon Valley was logging off for the holidays, Nvidia was busy executing the most aggressive defensive maneuver in its corporate history.

The $2 trillion chip titan has agreed to acquire the bulk of physical assets and engineering talent from Groq, a startup that had rapidly evolved from a bootstrapped underdog to a well-funded challenger. The deal, valued at approximately $20 billion in cash, comes just three months after Groq secured $750 million at a $6.9 billion valuation. That round included heavy hitters like BlackRock, Samsung, Cisco, and 1789 Capital, the fund associated with Donald Trump Jr.

This isn’t just a purchase; it’s a consolidation of power. At stake is the most critical bottleneck in the next phase of AI: inference latency. Nvidia has just paid a premium to ensure that this problem—and the company solving it best—belongs to them.

We wanted to build the world’s fastest thinking machine, not just another GPU.

That has been Groq founder Jonathan Ross’s private mantra for years. Now, that machine belongs to Jensen Huang.

From Google’s Skunkworks to Nvidia’s Balance Sheet

Ross isn’t the typical founder incubated in a VC lab. Before Groq, he was instrumental in architecting Google’s original TPU, the custom silicon that facilitated early deep learning breakthroughs. That experience instilled a specific conviction: general-purpose GPUs, despite their power, were the wrong instrument for the next phase of AI.

The issue is latency. Training massive models requires throughput and massive batch sizes, an area where GPUs reign supreme. But real-world users are impatient. Inference, the act of serving live answers from chatbots, coding assistants, and search engines, requires deterministic speed. It needs to be measured in milliseconds, not “usually fast.”
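The tension between batch throughput and per-request latency can be sketched with a toy model. This is a minimal illustration, not a benchmark: the function names, the 10 ms arrival gap, and the compute times are all hypothetical values chosen only to show the shape of the trade-off.

```python
def batched_latency_ms(batch_size: int, arrival_gap_ms: float, compute_ms: float) -> float:
    """Latency seen by the first request in a batch.

    It waits for (batch_size - 1) later arrivals, then for one compute pass.
    """
    return (batch_size - 1) * arrival_gap_ms + compute_ms


def throughput_per_s(batch_size: int, compute_ms: float) -> float:
    """Requests completed per second when batches run back to back."""
    return batch_size * 1000.0 / compute_ms


# Illustrative numbers only (assumed, not measurements of any real chip).
for batch, compute in [(1, 20.0), (32, 40.0)]:
    latency = batched_latency_ms(batch, arrival_gap_ms=10.0, compute_ms=compute)
    rate = throughput_per_s(batch, compute)
    print(f"batch={batch:>2}  latency={latency:.0f} ms  throughput={rate:.0f} req/s")
```

Under these assumed numbers, the larger batch completes far more requests per second, but the earliest request in it waits far longer, which is the gap a latency-first design targets.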

Groq’s breakthrough came when Ross and a team of defectors from Google and Apple decided to slaughter one of the industry’s sacred cows: external memory. They bypassed the High Bandwidth Memory (HBM) supply crunch entirely, building a radical architecture based on a 144-way VLIW design paired with 230 MB of on-chip SRAM.

No HBM. No DDR. No memory stalls. Just a conveyor belt for data.

The result was a chip that, for specific Large Language Model (LLM) workloads, could generate tokens 10x faster than Nvidia’s premier GPUs. While incumbents optimized for data center batch jobs, Groq was streaming text at a speed that felt like conversation.

Groq showed that latency could be a first-class design goal in silicon. That scared everyone at the top of the stack.

A Startup Too Dangerous to Ignore

Founded in 2016, Groq operated in relative obscurity for years, burning through capital to bring its first-generation silicon to market. The business model was a hybrid: sell the hardware to select partners, and sell access via GroqCloud, an inference-as-a-service platform.

By early 2025, that bet looked prescient. As LLMs transitioned from novelty to enterprise workflow, demand for inference exploded. GroqCloud boasted some of the best tokens-per-second benchmarks in the industry. A massive commitment from Saudi Arabia, reportedly involving over $1.5 billion and 19,000 chips, proved this was more than a science project. It was becoming a legitimate infrastructure play.

Wall Street took note. The September Series E valued the company at nearly $7 billion, a massive markup for a hardware startup in a cautious market. The pitch was simple: “latency is the new uptime.” As AI embeds itself into search, shopping, and support, sub-100ms response times become the only metric that matters.

Groq wasn’t trying to replace Nvidia everywhere. It was just trying to own the “fast lane.” Unfortunately for them, that lane cut right across Nvidia’s roadmap.

Groq was the first credible hardware company whose success could have re-priced Nvidia’s inference business. That’s the nightmare: not losing today’s customers, but losing tomorrow’s margins.

The “Licensing” Deal That Looks Like a Buyout

Officially, the companies are framing this as a non-exclusive technology licensing agreement. Groq remains an independent entity, and GroqCloud will continue operations under new CEO Simon Edwards.

Unofficially, the market sees it for what it is. Reports suggest Nvidia is paying roughly $20 billion to strip Groq of its physical assets—inventory, labs, and the majority of its engineering brain trust. Jonathan Ross, president Sunny Madra, and the core technical team are relocating to Nvidia.

This unusual structure is likely a maneuver to navigate antitrust scrutiny. By stopping short of a full equity acquisition, Nvidia locks up the technology and the talent without technically killing a competitor. However, the economics are stark: investors who bought in at $6.9 billion ninety days ago are seeing an implicit 3x markup. In hardware, where returns usually take a decade, that is lightning speed.
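The “3x” figure can be checked with simple arithmetic. The sketch below assumes, as the article implicitly does, that the full reported $20 billion can be compared directly against the $6.9 billion valuation; the function name is illustrative, and the real payout to any given investor would depend on deal terms not described here.

```python
def markup_multiple(deal_value_bn: float, prior_valuation_bn: float) -> float:
    """Ratio of the reported deal value to the earlier valuation."""
    return deal_value_bn / prior_valuation_bn


# Figures as reported in the article, in billions of dollars.
multiple = markup_multiple(20.0, 6.9)
print(f"Implied markup: {multiple:.2f}x")
```

On those inputs the multiple comes out just under 3x, consistent with the rounded figure quoted above.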

This is what a defensive premium looks like. Nvidia didn’t value Groq on current revenue. They valued the risk of not owning them.

Insurance for the Inference Age

For Nvidia, this isn’t about internal rate of return; it’s about insurance. The company has a stranglehold on training, but inference is where volume and margin pressure will eventually converge.

GPUs thrive on batching. Groq’s SRAM architecture thrives on instant, single-user requests. That distinction is vital for the future of ad revenue and subscription models dependent on real-time interaction.

By absorbing Groq’s assets, Nvidia achieves a trifecta:

- Neutralizing the Narrative: Groq was becoming the “anti-GPU,” a rallying cry for developers tired of Nvidia’s pricing power. That story ends today.

- Stack Integration: Nvidia can now integrate Groq’s compiler-driven, deterministic design into its future Blackwell architectures.

- Optionality: Nvidia can maintain GroqCloud as a “speed lane” brand, keeping regulators at bay while feeding expertise back into its own CUDA ecosystem.

The landscape remains hostile. AMD is pushing its MI-series, Google has its TPU roadmap, and Amazon has Trainium. But with Groq effectively folded into the Nvidia empire, the most credible low-latency threat has just been converted into a feature.

The New Reality for Hardware Founders

For Jonathan Ross and his team, this is vindication. They bet on specialized silicon before ChatGPT made “tokens” a household word. They survived the capital-intensive desert of hardware development and engineered one of the fastest late-stage markups in recent memory.

However, the deal sends a chilling signal to the rest of the ecosystem. In markets as concentrated as AI hardware, success isn’t just about revenue; it’s about how long you can survive before being “strategically acquired” by the incumbent.

This mirrors the playbook Big Tech used to dominate mobile and social networking. Only now, the currency is silicon. For investors, the quick 3x return validates the thesis that unique hardware still commands a premium. But it raises a difficult question: are VCs building enduring platforms, or simply packaging R&D departments for Nvidia to purchase?

Groq now stands at a crossroads. It keeps its IP and its cloud business, but loses the talent that made it special. It may evolve into a boutique, Nvidia-aligned service for high-frequency traders and latency-obsessed clients. Or, it may simply serve as a regulatory fig leaf.

One thing is certain: the next phase of the AI chip war won’t be a simple arms race for more power. It will be a battle for efficiency and latency. And with Groq’s engineers now reporting to Jensen Huang, Nvidia has made it clear they intend to dictate the terms of that surrender.
