By Kalia Ataliotou

The European Union’s AI recalibration and the United States’ push for rapid deployment are testing whether Brussels can still set the global standard.

With a comprehensive national AI legislative framework unveiled this week, the United States is making its most assertive move yet to lead global AI governance. For years, the conventional wisdom held that the EU wrote the world’s tech rules and everyone else followed. That assumption is now under serious pressure. The U.S. has moved to establish its own AI governance model, backed by a deregulatory federal posture and, now, a formal legislative agenda. At the same time, the EU is quietly retreating from the most ambitious elements of its own AI Act, under mounting pressure to compete. These developments represent a structural realignment of the global regulatory environment and a genuine test of whether the “Brussels Effect” – the mechanism by which EU regulatory standards become de facto global norms – still holds. This is the first real pressure test of the Brussels Effect since the GDPR made it a strategic reality.

The EU’s AI Act Under Pressure

The EU AI Act, which entered into force in August 2024, remains the world’s first comprehensive AI regulatory framework. Its risk-based architecture, which classifies systems by potential harm and imposes corresponding obligations, was designed to set a durable international standard. Less than two years in, it is already being revised. In November 2025, the European Commission introduced the Digital Omnibus package, including targeted simplification measures for the AI Act’s key provisions. The proposed changes are substantive. Compliance deadlines for high-risk AI systems would be tied to the availability of technical standards and pushed back from August 2026 to late 2027/2028. AI literacy obligations for most providers and deployers would be removed and replaced with a promotional duty for the Commission and Member States. Finally, enforcement authority would be centralized, with the Commission’s AI Office gaining exclusive oversight of GPAI-based systems and very large platforms. The European Parliament is still organizing its review, and the Council has yet to finalize its negotiating position, leaving the legislative trajectory uncertain.

This shift is driven by structural realities. It reflects a broader strategic pivot from regulatory primacy toward competitiveness, deployment, and technological sovereignty. Europe currently accounts for approximately 4-5% of global AI computing capacity, compared with roughly 74% for the United States and 15% for China. Policymakers have recognized that regulatory leadership alone cannot sustain competitiveness and sovereignty without parallel investment in infrastructure and commercialization. The April 2025 AI Continent Action Plan commits EUR 1 billion annually through existing instruments such as Horizon Europe and Digital Europe for the remainder of the current Multiannual Financial Framework (MFF), which runs until 2027. From 2028, the funding architecture is expected to evolve under a strengthened Horizon Europe and the proposed European Competitiveness Fund. Additionally, InvestAI aims to mobilize EUR 200 billion in total public-private investment, including EUR 20 billion in direct Commission contributions toward AI gigafactories. The Apply AI Strategy reinforces this direction by accelerating AI adoption across critical sectors such as health, manufacturing, and public services. This initiative clearly signals that the EU’s ambitions extend beyond regulatory frameworks to the active, large-scale deployment and integration of AI technologies.

Complementing these investment efforts, the proposed Cloud and AI Development Act (CADA), expected to form part of a broader Tech Sovereignty Package, seeks to address the foundational infrastructure challenges – such as data center capacity, energy availability, and access to compute resources – that the EU must overcome to develop and host frontier AI systems domestically. In particular, CADA seeks to promote sustainable infrastructure, secure data processing, and the uptake of European-based cloud providers. Tech sovereignty (the ability to develop, train, and deploy advanced AI within European jurisdictional control) is central to the political focus underpinning these initiatives.

The combination of regulatory refinement and increased capital deployment illustrates the EU’s dual objective: maintaining high standards for safety and fundamental rights while accelerating AI adoption and capacity-building to secure European tech sovereignty. While officials publicly emphasize that “the race will be won on implementation,” the EU’s massive investment in computing infrastructure signals deep anxiety about foundational model development too, a challenging dual mandate. If the EU cannot reach agreement on compliance deadline amendments before August 2026, companies face a legal limbo: comply with rules that may soon change, or risk non-compliance with rules that are still technically in force.

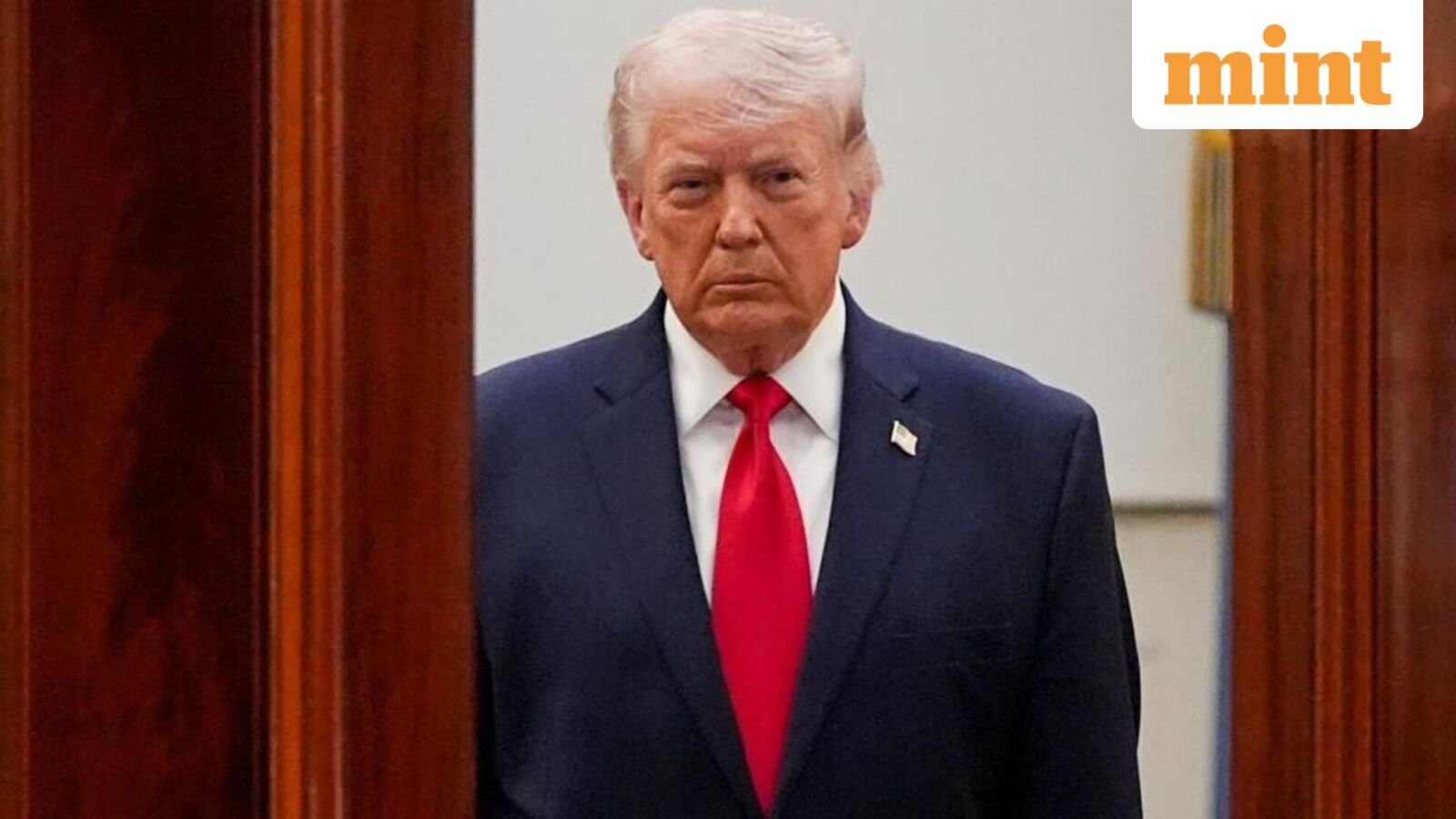

The US Bets on a Federal Framework

The U.S. has taken a fundamentally different approach, prioritizing capital deployment, infrastructure, and private-sector innovation over comprehensive federal regulation. Shortly after taking office in January 2025, President Donald Trump rescinded the Biden administration’s 2023 AI executive order. A subsequent executive order, titled Removing Barriers to American Leadership in Artificial Intelligence, framed AI development as central to economic and geopolitical competitiveness. AI captured roughly 50 percent of all global venture capital in 2025, with total AI investment reaching $202.3 billion, a 75 percent increase year-over-year. The Stargate Project, announced in January 2025, represents a $500 billion commitment over four years to build AI infrastructure in the United States, with the flagship Abilene, Texas campus already operational.

Yet, as with U.S. privacy regulation, the absence of a comprehensive federal framework has driven states to fill the vacuum themselves. In 2025, more than 1,208 AI-related bills were introduced across all 50 states, with 145 enacted by year-end. The only AI-specific federal statute enacted was the TAKE IT DOWN Act, addressing non-consensual intimate imagery. A proposed 10-year moratorium on state AI laws was stripped from budget reconciliation by a 99-1 Senate vote, a decisive signal that federal preemption remained politically untenable – until now.

On March 20, 2026, the Trump administration unveiled a comprehensive national AI legislative framework that takes direct aim at state AI laws. The framework calls on Congress to establish a uniform national policy across six priority areas: protecting children and empowering parents; safeguarding communities and energy infrastructure; respecting intellectual property while enabling AI development; preventing government-directed censorship; accelerating innovation and removing outdated barriers; and developing an AI-ready workforce. Critically, the framework states that it “can succeed only if it is applied uniformly across the U.S.,” explicitly calling out state law as a threat to American innovation and global competitiveness. This represents a significant escalation from executive-order-level deregulation toward a federal legislative posture that would preempt the rapidly expanding body of state AI law. Whether Congress acts on this framework remains the central open question in U.S. AI governance.

A Tale of Two Regulatory Models

The divergence between the EU and U.S. approaches reflects a fundamental misalignment about the relationship between regulation, risk, and competitive advantage. The EU’s framework is grounded in the precautionary principle: potential harms from high-risk AI systems should be identified, assessed, and mitigated before deployment. The current U.S. federal posture inverts this logic. Regulation is framed not as a safeguard but as a friction cost, and the January 2025 AI Action Plan is explicit about the stakes, positioning the United States in a race to “maintain American AI dominance” against geopolitical rivals. On that framing, speed and scale are the policy, and regulatory caution is the risk.

For global companies, these differences create a bifurcated compliance landscape. Organizations operating in both jurisdictions must account for the EU’s centralized, risk-tiered framework alongside the more decentralized and evolving U.S. environment. The implications extend beyond transatlantic coordination. The Brussels Effect has historically relied on the size of the EU market and the absence of a competing regulatory model of similar scale. The emergence of a distinct U.S. posture, combined with substantial infrastructure and investment momentum, introduces a challenge to the EU’s role as the default setter of global AI standards.

What Comes Next

The United States has, in the span of just over a year, moved from executive-order-level deregulation to a full federal legislative agenda for AI, one explicitly designed to preempt the state-law patchwork and establish the U.S. as the dominant rule-setter in the global AI race. The EU, meanwhile, is recalibrating its landmark framework under the weight of its own competitiveness anxieties, softening deadlines and consolidating enforcement even as it scales up investment. What was once a story of European regulatory leadership is now a contest.

For companies operating across both jurisdictions, the practical stakes are high. Convergence around a single global standard now looks increasingly unlikely. Instead, organizations face a dual-track compliance reality, one that requires active engagement with both frameworks rather than passive reliance on either. The question is no longer whether the Brussels Effect will hold. It is whether a new global standard is being written in Washington, and whether the rest of the world will follow.
