As artificial intelligence becomes deeply embedded in global decision-making, a quiet but critical problem is emerging: AI systems are scaling faster than our ability to govern them. According to recent industry surveys, more than 75% of enterprises now use AI in at least one core business function, yet fewer than 30% can fully explain how their AI systems reach decisions. It is within this widening gap between adoption and accountability that Dr. Jibril Mohamed Ahmed has positioned his life’s work.

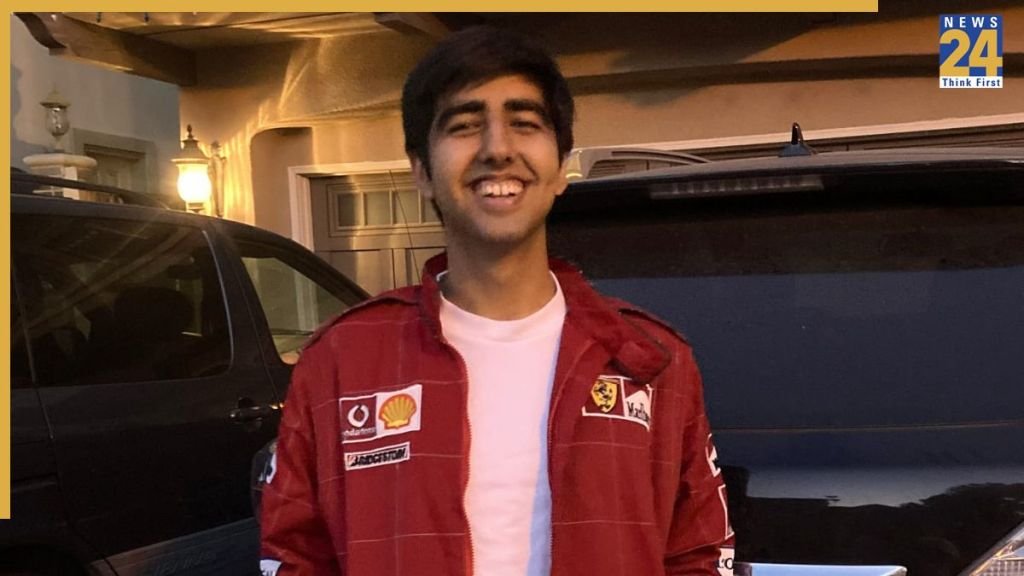

Born amid Somalia’s civil war and having lived as a refugee in Ethiopia for over two decades, Dr. Ahmed is the founder of OpenTI, a company building what he describes as the audit layer for the 21st-century economy—infrastructure designed to ensure that every autonomous decision is governable, every action explainable, and every system defensible.

A Founder Shaped by Order, Not Speed

Dr. Ahmed’s entrepreneurial journey has been marked by persistence—and rejection. He was turned away 874 times across different points in the venture and innovation ecosystem. But those rejections shared a common theme: his startups treated auditability, governance, and explainability as core products, not features to be added later.

This ran counter to a dominant startup doctrine. Today, over 60% of AI startups prioritize speed-to-market and performance metrics, often postponing governance until after traction. Industry research now shows the cost of that approach: 52% of enterprises have delayed or rolled back AI deployments due to governance or explainability concerns, and nearly 1 in 3 AI projects fail to scale beyond pilot stage for the same reason.

“I had already seen what happens when systems are powerful but unordered,” Dr. Ahmed explains. “Lack of risk aversion doesn’t lead to innovation—it leads to chaos.”

The Governance Gap in Agentic AI

The rise of agentic and multi-agent AI systems, in which autonomous agents execute workflows, make decisions, and coordinate with minimal human oversight, has intensified this challenge. Global spending on agentic AI is growing rapidly, with analysts estimating that agentic systems will account for nearly 10% of all enterprise AI investment by 2026.

Yet governance has not kept pace. Recent executive surveys show that while 78% of leaders say they trust their AI systems, only 40% have implemented formal governance, audit trails, or explainability controls. Even more concerning, fewer than 20% require AI systems to consistently “show their reasoning” in production environments.

This mismatch between trust and verification is precisely the problem OpenTI was designed to solve.

OpenTI: Engineering Accountability, Not Retrofitting It

OpenTI is built on a simple but contrarian premise: autonomous systems must be auditable by design. Instead of retrofitting compliance after deployment—a practice still followed by the majority of enterprises—OpenTI embeds governance directly into the lifecycle of AI systems.

This approach aligns with growing regulatory and operational realities. In finance, healthcare, and credit markets, regulators increasingly require decision traceability, model accountability, and reproducible audit logs. Industry data shows that over 70% of regulated enterprises expect AI audit requirements to materially increase within the next 24 months, yet most lack the infrastructure to meet those demands.

OpenTI positions itself as the missing layer between autonomous intelligence and institutional trust.

From Statelessness to Systems Thinking

Dr. Ahmed’s focus on governance is not theoretical—it is personal. Having lived much of his life navigating systems that lacked transparency or fairness, he developed a deep sensitivity to what happens when power operates without accountability.

This lived experience mirrors what many enterprises are now confronting. Studies show that 95% of executives have experienced at least one AI-related incident, from biased outcomes to compliance breaches, yet only 2–5% of organizations meet high-maturity standards for responsible AI governance.

“I’ve experienced what it means to be invisible to systems,” Dr. Ahmed says. “That’s why I believe systems themselves must never be invisible.”

Why the Audit Layer Is Becoming Non-Negotiable

As AI moves from assistance to autonomy, the economic stakes are rising. Analysts estimate that by the end of this decade, a majority of enterprise decisions in finance, operations, and risk management will be AI-influenced. Without auditability, organizations face not only regulatory exposure but also strategic paralysis: leaders cannot scale what they cannot explain.

Already, over half of enterprises report hesitating to expand AI usage because of governance uncertainty, not technical limitations. This signals a broader shift: trust infrastructure is becoming a growth enabler, not a constraint.

OpenTI is built for this moment—treating AI decisions with the same rigor as financial transactions, where auditability is assumed, not debated.

A Different Kind of Founder for a Different Kind of Problem

Dr. Jibril Mohamed Ahmed’s journey, from refugee to founder of foundational AI infrastructure, is not a conventional startup story. It is a story shaped by risk awareness, long-term thinking, and an insistence on order over speed.

In an industry often driven by rapid experimentation, his work stands as a reminder that the most valuable technologies of the 21st century will not be those that move fastest, but those that can be trusted longest.

As autonomous systems increasingly shape economies and lives, OpenTI is not merely building AI. It is building the audit layer that makes the future governable.
