New Zealand risks losing control over how artificial intelligence is used in critical sectors such as health, education and justice unless it introduces its own binding AI legislation and builds sovereign capability, according to industry experts.

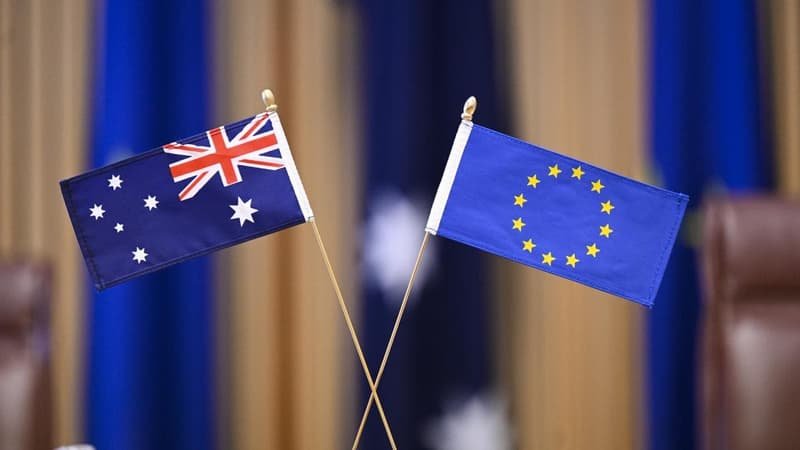

The warning comes as sweeping new artificial intelligence rules begin taking effect across Europe under the European Union’s Artificial Intelligence Act. While some provisions are already in force this year, the most stringent requirements for high-risk systems will apply from August 2, 2026. From that date, organisations operating in the EU must meet strict standards around risk assessment, transparency, documentation, human oversight and accountability.

New Zealand has no equivalent standalone AI law, with technology risks managed through the Algorithm Charter for Aotearoa, the Privacy Act and general human rights protections. There is no dedicated AI regulator and no unified compliance regime tailored specifically to artificial intelligence.

Dr. Athar Imtiaz, Applied AI researcher at Massey University and AI & Data Lead at digital consultancy Nodero, says AI adoption in New Zealand is moving faster than the governance structures designed to oversee it.

He says government agencies, including Te Whatu Ora, are already trialling generative AI tools to streamline document processing and service delivery, including in health care. At the same time, AI uptake in New Zealand’s finance and logistics sectors is being driven largely by private firms.

“As our capability continues to accelerate, our governance over the technology, unfortunately, is not keeping pace.”

Dr. Imtiaz says AI systems are increasingly influencing real-world decisions rather than operating as experimental tools.

“When AI assists with medical triage, welfare eligibility or justice processes, it becomes part of the decision-making infrastructure.

“Most modern AI systems are probabilistic in that they generate likelihoods, not certainties. We need clear standards for acceptable error and bias testing, and validation against datasets that properly reflect New Zealand’s communities, including Maori, rather than relying solely on international training data.”

Dr. Imtiaz says existing legislation was not drafted with machine-learning systems in mind.

“The Privacy Act remains important, but it does not define technical standards for model validation, dataset integrity or audit requirements. Without tailored legislation, accountability becomes fragmented.”

Beyond regulation, Dr. Imtiaz says New Zealand must develop its own AI training and adaptation capability if it wants systems that reflect local realities.

“Most advanced AI models are trained on international datasets shaped by larger economies.

“If we rely entirely on offshore systems, we adapt ourselves to their assumptions rather than shaping them to our context.”

Dr. Imtiaz says building sovereign capability would require investment in high-performance computing, secure data environments and specialist expertise.

He says that level of national AI infrastructure and fine-tuning capability could require several hundred million dollars over the next five years.

“Australia has already committed more than A$100 million in federal funding toward AI capability and regulatory reform, alongside broader digital infrastructure investment. Singapore has invested billions across successive national AI strategies. The United Kingdom continues to fund AI research and governance capacity through its central technology portfolio.

“In contrast, New Zealand has no dedicated AI budget line and no central authority equivalent to the Department for Science, Innovation and Technology in the UK, the Ministry of Digital Development and Information in Singapore, or Japan’s Digital Agency.”

Mark Easton, chief executive of Nodero, says the issue is not simply regulatory alignment but sovereignty.

He says the 2025 Oxford Insights Government AI Readiness Index ranks New Zealand around 40th globally, behind Australia and several comparable economies.

“When major markets such as Europe set compliance thresholds, vendors design to those standards.

“If New Zealand does not define its own statutory and institutional expectations, the operating assumptions embedded in AI systems will increasingly be shaped elsewhere.”

He says governance in New Zealand must also reflect its bicultural constitutional setting.

“Maori data sovereignty principles emphasise guardianship and collective rights over data.

“An imported regulatory model will not automatically reflect those obligations.”

“We should not simply copy Europe. We have the opportunity to design a framework that reflects New Zealand’s legal system, social expectations and Treaty commitments.”

Dr. Imtiaz says New Zealand has attracted investment from global cloud providers expanding local data centre capacity. However, he says commercial infrastructure alone does not equate to sovereign control.

He says reliance on offshore infrastructure also introduces physical and geopolitical exposure.

“The key questions are who controls model training, who audits outputs and which jurisdiction ultimately sets the compliance standards. Infrastructure location is only one part of that equation.

“If critical government systems are hosted or processed overseas, New Zealand inherits the resilience profile of that infrastructure. That includes exposure to foreign jurisdiction, regulatory shifts, and in extreme cases, physical attack or disruption to data centres or network routes beyond our control.”

He says that while such events are unlikely, national infrastructure planning traditionally accounts for low-probability, high-impact risks.

“We would not build an electricity grid or water system without considering resilience. AI systems are increasingly embedded in essential services. The same logic should apply.”

Dr. Imtiaz says that under the EU regime, high-risk AI systems must undergo conformity assessments and demonstrate ongoing human oversight. He says the standards are expected to shape global product design in much the same way Europe’s privacy rules reshaped international data protection.
