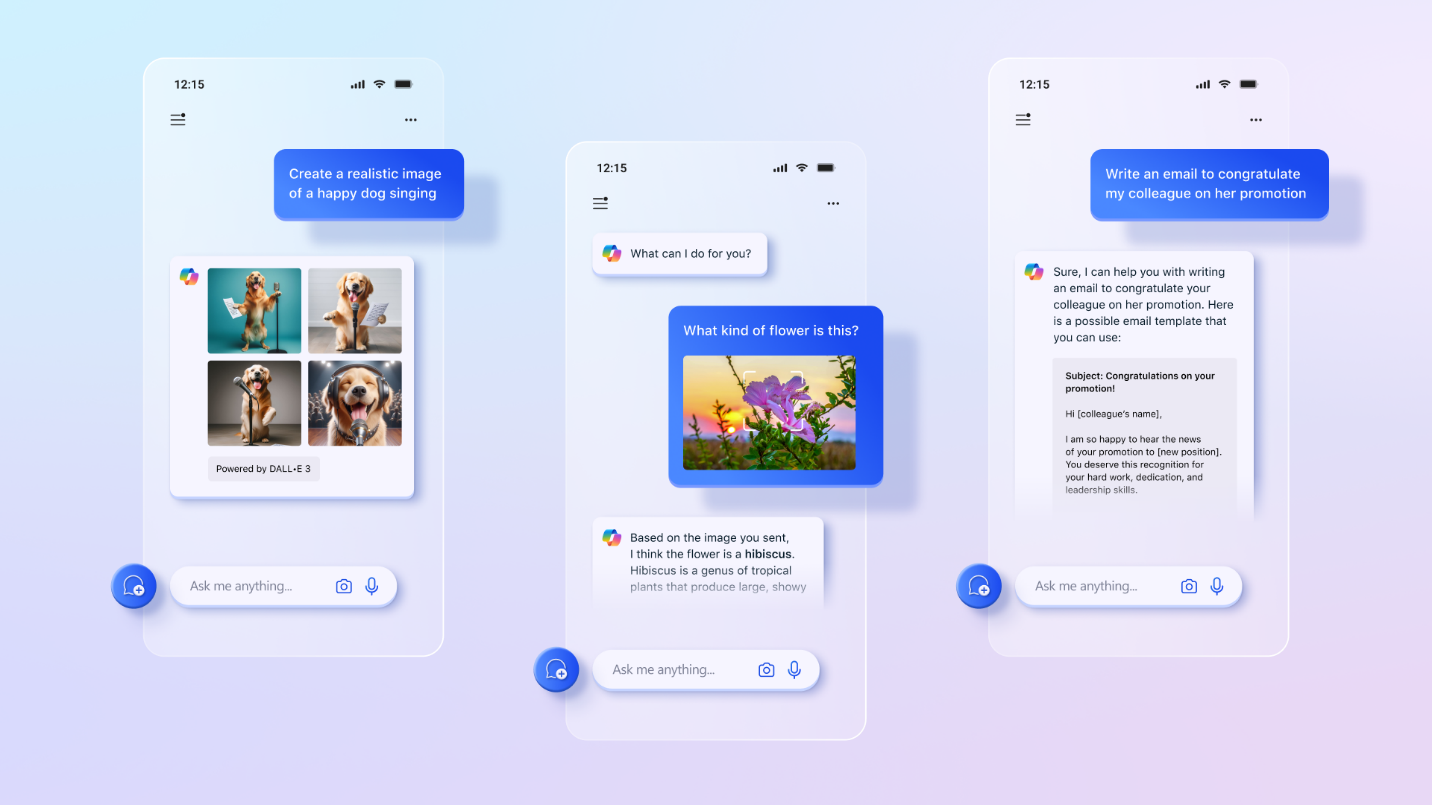

Microsoft is currently facing criticism on social media over the wording in Copilot’s terms of service. The company heavily markets its AI services to business customers and has deeply integrated the technology into Windows 11, yet the official contractual terms paint an entirely different picture. The terms, updated in October 2025, explicitly classify the AI assistant as an entertainment offering and warn users not to rely on the system for important decisions.

Specifically, the document states:

“Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don’t rely on Copilot for important advice. Use Copilot at your own risk.”

The terms of service make it unmistakably clear that Copilot is for entertainment purposes only. The document notes that the system can produce errors and may not function as intended. Microsoft explicitly advises users not to rely on the AI for significant advice and emphasizes that use is at the user’s own risk. A company spokesperson told media outlets that the wording would be updated because it consists of outdated standard clauses. According to the spokesperson, the language no longer reflects how Copilot is actually used today and will be adjusted with the next update.

AI Industry Relies on Similar Disclaimers

Microsoft is by no means alone in the technology industry with such disclaimers. Other providers of large language models use comparable warnings in their terms and conditions. OpenAI advises users not to treat the outputs of its systems as the sole source of truth or factual information. xAI is even more explicit, stating that artificial intelligence is rapidly evolving and probabilistic in nature. The company warns that the technology can produce hallucinations, generate offensive content, inaccurately represent real people or facts, or otherwise deliver results unsuitable for the intended purpose.

The practical consequences of excessive trust in AI systems are already evident in concrete incidents. At Amazon, AI-assisted processes have repeatedly caused significant disruptions. Reports indicate that an AI coding bot caused outages at Amazon Web Services after engineers allowed the system to resolve issues without human oversight. The Amazon website itself also experienced several high-impact incidents linked to AI-assisted changes. These incidents led to senior engineers being called into meetings to address the problems that arose.

Legal Protection Versus Marketing Promises

Companies typically add such disclaimers to their products and services to protect themselves from legal claims. However, while AI companies market their technology as the ultimate productivity advantage, they may be downplaying the associated risks in order to attract paying customers and recoup the billions they have invested in hardware and talent. A fundamental problem is that while generative AI is a useful tool and can indeed boost productivity, it bears no responsibility for its mistakes. Humans are also subject to so-called automation bias, a tendency to favor machine-generated results and ignore contradictory information.

The discrepancy between advertising promises and legal safeguards raises questions about the technology’s actual readiness for deployment. While many people are familiar with how large language models work, others treat AI outputs as absolute truth — even people who should know better. AI can produce results that appear plausible or even correct at first glance, which could further reinforce automation bias. Users must therefore always remain critical, question the outputs, and carefully verify the results, regardless of how convincing the manufacturers’ marketing campaigns may be.
