With Gemma 4, Google DeepMind has introduced a new generation of open AI models. The model family targets developers, researchers, and companies that want to run powerful AI systems on their own hardware. Gemma 4 is released under the permissive Apache 2.0 license and is therefore free for commercial use.

What is Gemma 4 and who is it for?

Gemma 4 is a family of open language models that, according to Google DeepMind, is based on the same research foundation as the proprietary Gemini 3 model. The models are explicitly designed to run on users' own hardware, from smartphones and IoT devices to laptop GPUs and professional developer workstations. The target audience is developers who want to run and customize AI applications locally or integrate them into existing systems.

The model family comprises four variants: the edge-optimized models Effective 2B (E2B) and Effective 4B (E4B) for mobile devices, as well as a 26-billion-parameter Mixture-of-Experts model and a 31-billion-parameter dense model for more demanding tasks on desktop and server infrastructure.
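To get a feel for what "own hardware" means for the two larger variants, the following minimal sketch estimates the memory needed just to store the weights, using the general rule of parameters times bits per parameter divided by eight. The parameter counts come from the text above; the bit widths (16, 8, 4) are illustrative assumptions and not official Gemma 4 release formats. The estimate ignores activations, the context cache, and runtime overhead. Note that a Mixture-of-Experts model must still hold all of its experts in memory, even though only some are active per token.

```python
# Rough weight-memory estimate: parameters * bits per parameter / 8.
# Parameter counts are taken from the article; the bit widths are
# illustrative assumptions, not official Gemma 4 formats.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

MODELS = {"26B MoE": 26e9, "31B dense": 31e9}

for name, params in MODELS.items():
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Under these assumptions, the 31B dense model needs roughly 62 GB at 16 bits per parameter and about a quarter of that at 4 bits, which is why lower-precision formats matter for laptop and workstation GPUs.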

Strengths according to Google DeepMind

Google DeepMind highlights several core capabilities of the new model generation:

- Enhanced reasoning: Gemma 4 is said to handle multi-step planning and complex logic tasks, and according to Google shows significant improvements in mathematics and instruction-following benchmarks.

- Agentic workflows: Native support for function calls, structured JSON outputs, and system instructions enables the construction of autonomous agents that interact with external tools and APIs.

- Code generation: The models are intended to enable high-quality code generation offline and to act as a local AI coding assistant.

- Multimodality: All models natively process images and videos. The smaller E2B and E4B models additionally support audio inputs for speech recognition.

- Long context: The edge models offer a context window of 128,000 tokens; the larger models support up to 256,000 tokens.

- Multilingualism: The models were trained on more than 140 languages.
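The agentic workflow mentioned in the list above can be illustrated with a minimal sketch: a model emits a structured JSON tool call, and the application parses it and dispatches it to a local function. Everything here is hypothetical, including the `get_weather` tool, the `dispatch_tool_call` helper, and the `name`/`arguments` schema. It is not Gemma 4's actual function-calling format, which depends on the runtime and chat template in use.

```python
import json

# Hypothetical tool; a stand-in for a real external API call.
def get_weather(city: str) -> dict:
    """Return canned weather data for illustration."""
    return {"city": city, "temperature_c": 21}

# Hypothetical registry mapping tool names to local functions.
TOOLS = {"get_weather": get_weather}

def dispatch_tool_call(model_output: str) -> dict:
    """Parse a JSON tool call emitted by a model and run the matching tool."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        return {"error": f"invalid JSON: {exc.msg}"}
    if not isinstance(call, dict):
        return {"error": "tool call must be a JSON object"}
    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    if not isinstance(args, dict):
        return {"error": "arguments must be a JSON object"}
    try:
        return {"result": TOOLS[name](**args)}
    except TypeError as exc:
        # Raised when the arguments do not match the tool's signature.
        return {"error": f"bad arguments: {exc}"}

# Assumed example of what a model might emit; the schema is an assumption.
example_output = '{"name": "get_weather", "arguments": {"city": "Berlin"}}'
print(dispatch_tool_call(example_output))
```

In a real agent, the returned dictionary would be fed back to the model as the tool result. Validating the parsed call before executing it keeps malformed or unexpected model output from reaching the tool itself.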

Classification: Where does Gemma 4 stand in comparison?

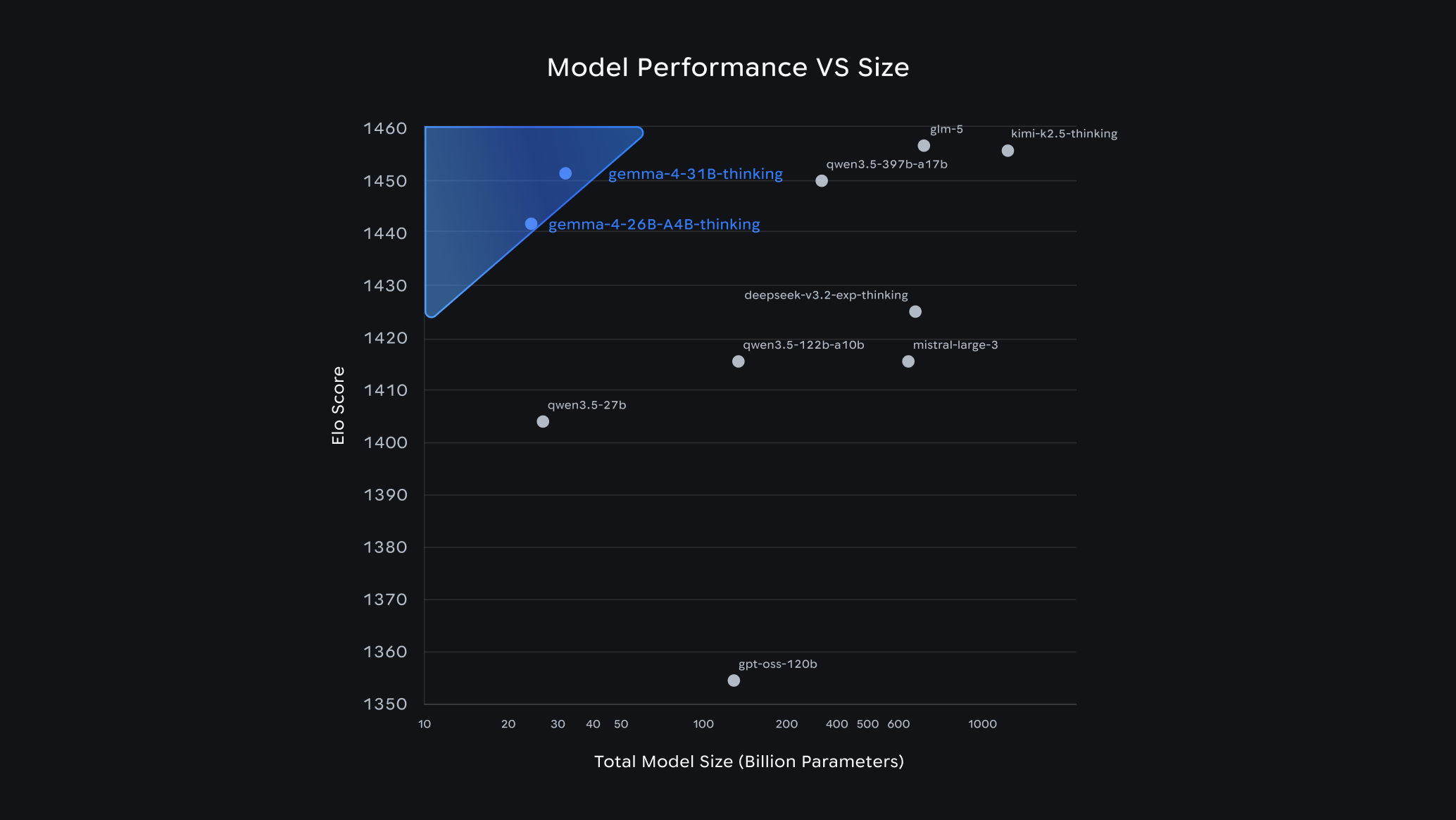

Google currently positions the 31B model at rank 3 on the Arena AI text leaderboard among all open models worldwide, and the 26B model at rank 6. According to Google’s own statements, Gemma 4 thus outperforms models with up to twenty times more parameters. A direct comparison with the most powerful Chinese open-source models, such as those from the DeepSeek family, shows, however, that Gemma 4 cannot yet keep up there. In recent months, Chinese providers have set benchmarks with very large open models that Gemma 4 does not reach in this weight class.

Compared to OpenAI’s open model, Gemma 4 performs significantly better according to available benchmark data. The model shows particularly strong results in mathematics (AIME 2026: 89.2 percent for the 31B model), scientific knowledge (GPQA Diamond: 84.3 percent), and competitive coding (LiveCodeBench v6: 80.0 percent).

Relevant for a comparison with other open-source AI models is the chart above, which shows that Gemma 4 falls slightly behind Qwen 3.5 from Alibaba, GLM-5 from Zhipu AI, and Kimi K2.5 from Moonshot AI, though not by much. More pronounced is the large gap to GPT-OSS-120B from OpenAI, which Google likely included in the chart intentionally.

Availability and Ecosystem

The model weights are available immediately via Hugging Face, Kaggle, and Ollama. Support is available from day one for common frameworks such as Hugging Face Transformers, vLLM, llama.cpp, MLX, and LM Studio. For production use in the cloud, Google offers deployments via Vertex AI, Cloud Run, and Google Kubernetes Engine.

For Android developers, Gemma 4 is available via the AICore Developer Preview, with the goal of forward compatibility with Gemini Nano 4. The Apache 2.0 license permits both private and commercial use without significant restrictions, making Gemma 4 a serious option for companies that prioritize digital sovereignty and data control.
