The number arrived quietly on a Thursday, via Bloomberg. Sarvam AI, the two-and-a-half-year-old company headquartered in Bengaluru, was closing a round of $300 million to $350 million at a valuation of $1.5 billion to $1.55 billion. Bessemer Venture Partners would lead. Nvidia, Amazon, and Prosperity7 Ventures would join, the last being the Saudi Aramco-backed growth fund that co-led Together AI’s $305 million Series B alongside Nvidia and has made a habit of appearing wherever serious AI infrastructure money moves.

Sarvam’s last fundraise was $41 million. That was December 2023. In under 30 months, the company’s valuation has appreciated roughly 14 times. Its annual revenue as of March 2025 stood at Rs 29.1 crore. The gap between what the company earns and what investors are prepared to pay for it is, depending on your disposition, either a tribute to AI optimism or a measure of how strategically important what Sarvam is attempting has become. The investors, at least, have made their call.

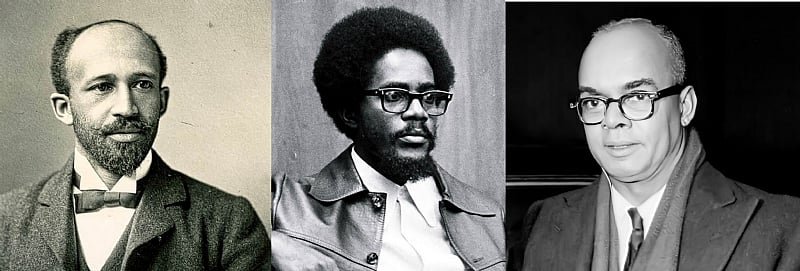

The Men Who Built Aadhaar Now Want to Build India’s Mind

Vivek Raghavan spent over a decade at the Unique Identification Authority of India as Chief Product Manager and Biometric Architect for Aadhaar, the system that enrolled over a billion people into a unified digital identity at a cost and speed the rest of the world still studies and cannot quite replicate. He knows what population-scale technology looks like from the inside. Not the slide deck version. The actual version, with the queues and the edge cases and the states that refused to cooperate and the thing working anyway.

Pratyush Kumar, an adjunct faculty member at IIT Madras, co-founded AI4Bharat — an open-source initiative built on the conviction that AI developed primarily on English data, by researchers in California, would always fit India imperfectly. Both men came up inside Nandan Nilekani’s orbit. Both carry the particular worldview of people who believe India’s digital infrastructure is its greatest strategic asset, and who have spent careers attempting to prove it.

They founded Sarvam in August 2023. Within five months, Lightspeed and Peak XV had written a cheque. “Peak XV and Lightspeed took less than a week to invest in Sarvam’s seed round,” a source told TechCrunch at the time. That is not due diligence. That is a reflex. Venture capital moves at that speed for things it is afraid of missing.

How the Round Changed Shape

The deal Bloomberg reported on 2 April is the third and largest act in a negotiation that began life looking quite different.

Moneycontrol and the Economic Times broke the first version in late March: Sarvam raising $200–250 million from Nvidia, Accel, and HCLTech. Clean logic. Nvidia because Sarvam already runs on its hardware. Accel for distribution outside India and growth capital. HCLTech as something more symbolically loaded: a signal that India’s traditional IT services sector, the industry that has spent several nervous years watching AI approach its business model with a flamethrower, was prepared to back the startup that might accelerate that process rather than simply wait for it.

Then Accel dropped out. Bessemer stepped in and took the lead. Amazon joined quietly, the way a very large ship enters a harbour without making a fuss about its own displacement. Prosperity7 appeared alongside Nvidia, consistent with the pattern it established at Together AI, where it and Nvidia have begun to look less like co-investors and more like a travelling partnership.

HCLTech is the unresolved note. The company said, with its customary precision, that it does not comment on speculation. It does not appear in Bloomberg’s final investor list. Whether it participated in what became a larger, more globally-composed round is unknown. If it did, it would mark the first time in recent memory that a major Indian IT company took a strategic stake in a domestic AI startup, significant in an industry that has, until now, responded to AI less like an opportunity and more like a condition to be managed.

Read the final syndicate for what it tells you. Bessemer is a long bet on AI-enabled services in India. Nvidia is protecting its hardware franchise: every successful Indian AI startup is a future H100 customer. Amazon brings AWS as a distribution channel; a model that runs well on Amazon’s cloud is a model with reach. And Prosperity7, showing up alongside Nvidia again, is executing a strategy, not making a one-off call.

Rs 99 Crore and the Question It Raises

Here is the number. Rs 99 crore (approximately $11 million) in GPU subsidies, transferred to Sarvam by the government of India through the IndiaAI Mission as compute access: 4,096 Nvidia H100 SXM GPUs provisioned through Yotta Data Services. The largest single allocation the Mission has made, out of Rs 111 crore disbursed in total, from a corpus of Rs 10,000 crore backed by an additional Rs 10,372 crore in the 2026–27 Union Budget.

India has, in effect, picked its national AI champion and given it a government-issued head start in a race where everyone else bought their running shoes in Palo Alto with private money.

Training a frontier model from scratch, in the manner OpenAI or Google does, requires tens of thousands of GPUs running for months. Four thousand and ninety-six H100s is modest against that benchmark. Against what Indian public infrastructure has historically provided to private companies, it is extraordinary. The IndiaAI compute portal prices access at Rs 65 per hour against a global market rate of Rs 210–250. That discount, compounded across a full training run, shows up in a model. Not in the announcement. In the model.
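To put a rough scale on that discount, here is a back-of-the-envelope sketch. It assumes the quoted rates apply per GPU per hour, and it uses a hypothetical 30-day run that keeps all 4,096 GPUs busy; neither assumption comes from the Mission or from Sarvam, and the real length of any training run is not public.

```python
# Illustrative arithmetic only. Figures marked "from the article" are quoted
# above; everything else is an assumption made for the sake of the example.

GPUS = 4096                      # from the article: H100 SXM GPUs allocated
SUBSIDISED_RATE = 65             # from the article: Rs per hour (assumed per GPU)
MARKET_RATE_RANGE = (210, 250)   # from the article: Rs per hour (assumed per GPU)
RUN_DAYS = 30                    # hypothetical training-run length

CRORE = 10_000_000               # 1 crore = 10 million


def run_cost_crore(rate_per_gpu_hour: float, gpus: int = GPUS, days: int = RUN_DAYS) -> float:
    """Cost in Rs crore of keeping `gpus` busy for `days` at the given rate."""
    gpu_hours = gpus * days * 24
    return gpu_hours * rate_per_gpu_hour / CRORE


def discount(subsidised: float, market: float) -> float:
    """Fraction saved relative to the market rate."""
    return 1 - subsidised / market


subsidised_cost = run_cost_crore(SUBSIDISED_RATE)
market_costs = [run_cost_crore(r) for r in MARKET_RATE_RANGE]
discounts = [discount(SUBSIDISED_RATE, r) for r in MARKET_RATE_RANGE]

print(f"Subsidised: Rs {subsidised_cost:.1f} crore")
print(f"Market:     Rs {market_costs[0]:.1f} to {market_costs[1]:.1f} crore")
print(f"Discount:   {discounts[0]:.0%} to {discounts[1]:.0%}")
```

Whatever run length is plugged in, the discount itself depends only on the two rates: roughly 69 to 74 per cent off the market price.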

IT Minister Ashwini Vaishnaw said Sarvam’s work carries “innovations in programming as well as engineering” that allow it to compete with the world’s best. Ministers say things. Raghavan, whose natural register is less triumphalist, called the launches “a significant first step” and acknowledged that matching Gemini or Claude at scale requires capital of a categorically different magnitude. That is the more useful statement.

14 Days, 14 Launches, 2 Models That Matter

The products that appear to have catalysed this round arrived in a burst at the India AI Impact Summit at Bharat Mandapam, New Delhi, in February 2026. Sarvam ran what it called a “14 days, 14 launches” campaign, a deliberate echo of OpenAI’s rapid-release strategy from late 2024, executed at a fraction of the budget and with, it must be said, considerably more to prove.

The headliners were Sarvam-30B and Sarvam-105B. Both trained from scratch on Indian soil. Both open-sourced under Apache 2.0 on Hugging Face and AIKosh. Both built on a Mixture-of-Experts architecture, which, for anyone who finds model design abstract, works like a Formula 1 hybrid power unit. Rather than firing all cylinders on every corner, it routes each token to whichever subset of parameters is best equipped to handle it. The result is efficiency, precision, and inference costs that a dense model of equivalent parameter count cannot match.

The 30B activates roughly one billion parameters per token, supports a 32,000-token context window, and was trained on 16 trillion tokens. The 105B activates nine billion parameters across a 128,000-token context window — headroom sufficient for complex reasoning, long-document understanding, and agentic workflows to function without the model losing the thread three pages in.
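For readers who prefer code to motor-racing metaphors, the routing idea can be sketched in a few lines. This is a toy top-k router, not Sarvam's implementation: the expert count, the top-k value, the vector size and the random weights are all hypothetical. The final loop assumes the "30B" and "105B" in the model names are total parameter counts and sets the article's per-token figures against them.

```python
import math
import random

# A toy top-k Mixture-of-Experts layer. All sizes, the top-k value and the
# "experts" themselves are hypothetical; they are not Sarvam's configuration.

NUM_EXPERTS = 8
TOP_K = 2
DIM = 4

rng = random.Random(0)

# Each "expert" here is just a DIM x DIM weight matrix.
experts = [[[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(DIM)]
           for _ in range(NUM_EXPERTS)]
# The router holds one scoring vector per expert.
router = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def route(token):
    """Return (expert_index, weight) pairs for the TOP_K best-scoring experts."""
    scores = matvec(router, token)
    chosen = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    # Softmax over the chosen experts only, so the weights sum to 1.
    peak = max(scores[i] for i in chosen)
    exps = [math.exp(scores[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(token):
    """Run only the chosen experts and mix their outputs by router weight."""
    out = [0.0] * DIM
    for idx, weight in route(token):
        expert_out = matvec(experts[idx], token)
        out = [o + weight * y for o, y in zip(out, expert_out)]
    return out


token = [rng.gauss(0, 1) for _ in range(DIM)]
picked = route(token)
output = moe_forward(token)
print("experts used:", [i for i, _ in picked], "of", NUM_EXPERTS)

# Active share implied by the article's figures (billions of parameters),
# assuming the model names give total parameter counts.
for name, active, total in [("Sarvam-30B", 1, 30), ("Sarvam-105B", 9, 105)]:
    print(f"{name}: about {active / total:.1%} of parameters active per token")
```

The point of the design is in `moe_forward`: the six experts that were not picked do no work for this token, which is where the inference saving comes from.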

Kumar’s benchmark claim, that the 105B outperforms Gemini Flash on Indian language tasks and beats Gemini 2.5 Flash specifically on Indic benchmarks, deserves a moment’s scrutiny. These figures are self-reported. They have not been independently verified on the public leaderboards the global research community uses. The honest assessment: Sarvam’s models are impressive for their compute budget, and Indian language capability is a genuine gap in global frontier models that Sarvam has invested seriously in closing. Whether that lead holds as Gemini, Claude, and GPT continue their development is the question the next 18 months will answer, and Sarvam has no control over the timeline.

The broader suite includes Saaras V3 for speech-to-text across Indian languages, Sarvam Vision for document OCR in Indian scripts, Samvaad for conversational enterprise deployments, and Indus — a consumer chat application available on iOS, Android, and the web.

Then there is Chanakya. Announced 29 March 2026, after what the company described as over a year of quiet development. Chanakya is Sarvam’s applied AI vertical for environments where failure, in the company’s words, “is not an option”: defence organisations, regulated financial institutions, government departments that cannot route sensitive data through public cloud infrastructure in California. Air-gapped, on-premise deployments. Multi-modal data ingestion. Production-grade agentic workflows. Raghavan has described Sarvam as “largely B2B,” working with enterprises and governments. Chanakya is the product that makes that description an actual business rather than a positioning statement.

Where Sarvam Actually Sits

Five companies unveiled foundational models at the AI Impact Summit under the IndiaAI Mission: Sarvam, Gnani.ai, the IIT Bombay-led BharatGen consortium, Fractal, and Tech Mahindra. BharatGen received Rs 989 crore in its third Mission tranche, roughly ten times Sarvam’s allocation, and represents a coalition of nine premier Indian institutions. The structural difference matters: BharatGen is an academic initiative. Sarvam is a company with a revenue target and a payroll.

Globally, the comparison is more uncomfortable, and worth making clearly. OpenAI closed a $110 billion round in February 2026. Anthropic has multi-billion-dollar backing from Google and Amazon. DeepSeek demonstrated, with an efficiency that briefly panicked Silicon Valley, that constrained compute and rigorous engineering can produce results that embarrass better-funded competitors. Sarvam’s positioning (Indian languages, sovereignty, cost efficiency, on-premise capability) is coherent. But the global frontier spends more per quarter than Sarvam’s entire fundraise to date. These are not the same race. Sarvam is not, and has never claimed to be, competing for the title of world’s most capable general-purpose AI. It is competing for something narrower and, for India, considerably more consequential.

Jaspreet Bindra of AI & Beyond put it with precision: building Sarvam’s models “under severe capital, compute, and infrastructure constraints is a significant achievement” — and cautioned, correctly, against comparing them with GPT-4, Gemini, Claude, or DeepSeek on general capability. The categories are different. The markets are different. That distinction is the entire argument.

India’s AI market is projected to reach $126 billion by 2030 with a potential $1.7 trillion contribution to GDP by 2035, per Inc42’s Bharat AI Startups Report. India’s startup ecosystem raised $10.5 billion in 2025 across 39 per cent fewer deals than the year before, per Tracxn: capital becoming more deliberate, more selective, more concentrated in companies with a credible path rather than a compelling deck. Against that backdrop, a $350 million round for a foundational AI model company is the largest private funding for an Indian startup in 2026 and the biggest single infusion into a pure-play Indian AI company in history.

What $350 Million Buys, Honestly

Not frontier dominance. Something more durable, potentially.

Training models at the scale of GPT-4 or Gemini Ultra requires compute investments running into the tens of billions. Sam Altman has said the capital requirements of the AI age are unlike anything venture capital has historically financed. He is right. Against that context, $350 million for a company with Rs 29.1 crore in revenue and 114 employees is either visionary conviction or extraordinary faith in a thesis. The investor list leans toward the former.

The grounded framing: $350 million, extended by IndiaAI Mission compute subsidies, Bessemer’s network, Nvidia’s hardware relationships, and Amazon’s cloud distribution, is the right amount of capital to build and sustain a world-class AI company for Indian markets (in Indian languages, on Indian infrastructure, for Indian and adjacent enterprise customers) if the ambition is relevance rather than global supremacy. That market is large. India’s AI-driven services already contribute an estimated $10 to $12 billion to its $315 billion technology industry revenue. The ceiling is far higher.

Raghavan, in a conversation with Business Today following the Summit, said: “Sovereignty is important in the long run. You have to look at the long arc.” He is building something designed to prevent India from becoming, in his words, “merely a consumer in a future shaped elsewhere, a new form of technological colonisation.” That is conviction. Whether it combines with this capital and these partners to produce a company that sustains competitive relevance over a decade is the bet on the table.

India’s relationship with technology has, for most of its modern history, been one of extraordinary execution and imported invention. The engineers who built Silicon Valley’s infrastructure, who staffed the back offices that processed the world’s software, who resolved its complaints at two in the morning — they came from India. The ideas came from elsewhere.

The Sarvam-105B model, trained on Indian soil with Indian compute on Indian language data, built by a founding team that includes the architect of Aadhaar, open-sourced for any developer anywhere to use, is a departure from that arrangement. Small, still. The gap between what Sarvam has built and what OpenAI or Google or even DeepSeek has built is real and wide and will not close quickly.

But Raghavan is not wrong about the arc. He built Aadhaar in a country that, at the time, many said could not build Aadhaar. He has some experience with long arcs.

$350 million is a start.