Microsoft is asking a federal court to block the Pentagon’s designation of Anthropic’s AI technology as a national security risk, tying the two companies together in a legal battle with the Trump administration.

In a legal filing Tuesday, Microsoft challenged Defense Secretary Pete Hegseth’s move last week to label Anthropic as a supply chain risk, which effectively cut Anthropic off from its work with the U.S. military and, potentially, with any companies doing business with the Pentagon. President Donald Trump also ordered all federal agencies to stop using Anthropic technology.

Anthropic sued the Pentagon on Monday, asking a federal court in San Francisco to temporarily block the designation. Microsoft’s brief was filed in support of Anthropic’s lawsuit.

The clash between Anthropic and the Trump administration played out publicly earlier this month after contract negotiations broke down. Anthropic remained steadfast on two terms of service: no mass surveillance of Americans and no fully autonomous weapons. With Anthropic unwilling to budge, the Pentagon dropped the company and designated it a national security risk.

The Pentagon’s dispute with Anthropic happened amid the Trump administration’s decision to launch strikes in Iran.

The Pentagon is giving itself six months to transition off Anthropic’s technology but is demanding contractors do so immediately.

The Pentagon quickly found OpenAI willing to fill the vacuum. OpenAI CEO Sam Altman has since said his company’s move looked “opportunistic and sloppy.”

Altman also said in a social media post that OpenAI was amending some of the language in its deal, but CNBC reported earlier this month that Altman told employees the Pentagon made it clear that OpenAI won’t get to make operational decisions.

Microsoft, which has committed billions of dollars to both Anthropic and OpenAI, explicitly supported Anthropic’s ethical guidelines in its legal filing.

“AI should not be used to conduct domestic mass surveillance or put the country in a position where autonomous machines could independently start a war,” Microsoft said.

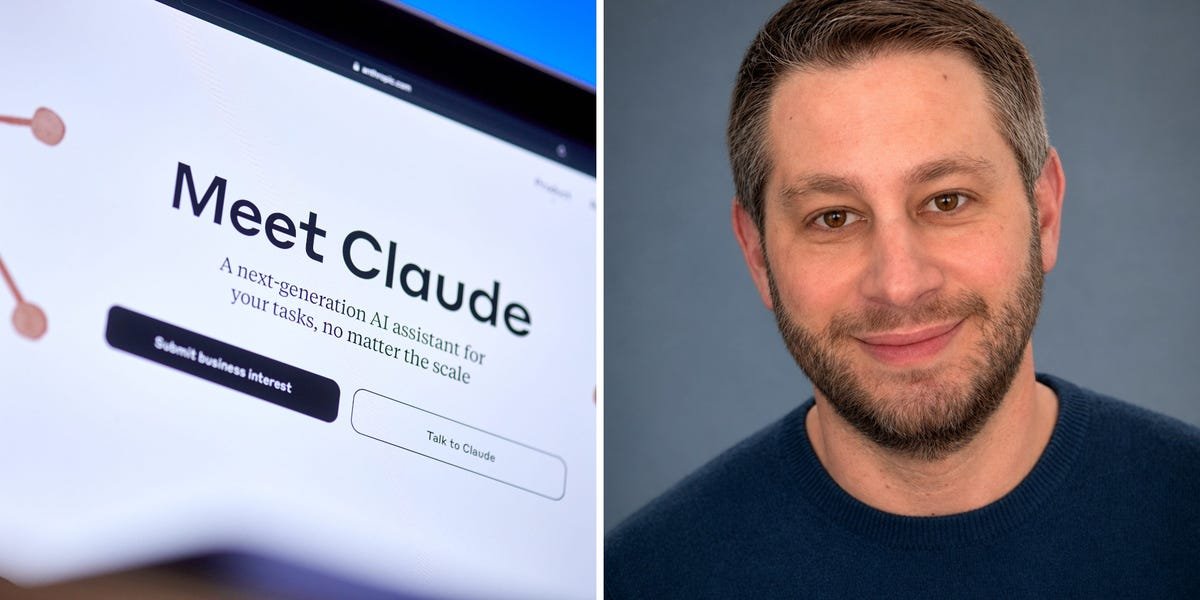

The company also argued that the Pentagon’s hasty designation could have far-reaching effects. Microsoft and other government contractors use Anthropic’s AI models to build products. The day before Microsoft filed its brief, the company launched a new product called Copilot Cowork that was developed on Anthropic’s Claude.

In asking for a temporary block of the designation, Microsoft said it would give the Pentagon more time for an orderly transition and avoid any disruption within the military.

“Otherwise, Microsoft and other technology companies must act immediately to alter existing product and contract configurations,” Microsoft said. “This could potentially hamper U.S. warfighters at a critical point in time.”

The company said the lack of a transition period for contractors will impose wide-ranging costs on Microsoft and other contractors that use Anthropic technology for products and services they provide to the military.

Microsoft’s brief in support of Anthropic came after several others had been filed, including one from a group of Google and OpenAI workers. It was followed by a brief from a group that included a former CIA director and a retired Coast Guard admiral.
